Consider the photo above. If you ever took Psych 101, it should be familiar. The year is 1951. The balding man on the right is psychologist Solomon Asch. Gathered around the table are a bunch of undergraduates at Swarthmore College participating in a vision test.

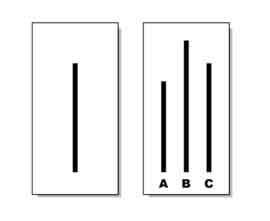

Briefly, the procedure began with a cardboard printout displaying three lines of varying length. A second card containing a single line was then produced, and participants were asked to state out loud which of the three it best matched. Try it for yourself:

Well, if you guessed “C,” you would have been the only one to do so, as all the other participants taking the test on that day chose “B.” As you may recall, Asch was not really assessing vision. He was investigating conformity. All the participants save one were in on the experiment, instructed to choose an obviously incorrect answer in twelve out of eighteen total trials.

The results?

On average, participants went along with the majority about a third of the time, and seventy-five percent conformed in at least one trial.

Today, practitioners face a similar pressure: to go along with the assertion that some treatment approaches are more effective than others.

Regulatory bodies, including the Substance Abuse and Mental Health Services Administration in the United States and the National Institute for Health and Care Excellence in the United Kingdom, are actually restricting services and limiting funding to approaches deemed “evidence based.” The impact on publicly funded mental health and substance abuse treatment is massive.

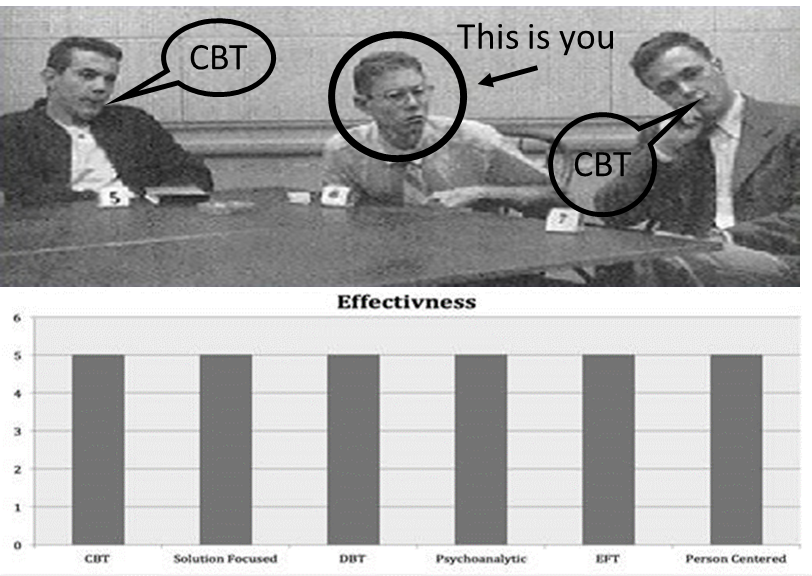

So, in the spirit of Solomon Asch, consider the lines below. Which treatment is most effective?

If your eyes tell you that the outcomes of competing therapeutic approaches appear similar, you are right. Indeed, one of the most robust findings in the research literature over the last 40 years is the lack of difference in outcome between psychotherapeutic approaches.

The key to changing matters is speaking up! In the original Asch experiments, for example, the addition of even one dissenting vote reduced conformity by 80%! And no, you don’t have to be a researcher to have an impact. In a later study, when a single dissenter wearing thick glasses (strongly suggestive of poor eyesight) was added to the group, the likelihood of going along with the crowd was cut in half.

That said, knowing and understanding science does help. In the 1980s, two researchers found that engineering, mathematics, and chemistry students conformed to the errant majority in only 1 out of 396 trials!

What does the research actually say about the effectiveness of competing treatment approaches?

You can find the most current review of the research in the latest issue of Psychotherapy Research, the premier outlet for studies about psychotherapy. It’s just out, and I’m pleased and honored to have been part of a dedicated and esteemed group of scientists who are speaking up. In it, we review and redo several recent meta-analyses purporting to show that one particular method is more effective than all others. Can you guess which one?

The stakes are high, and the consequences serious. Existing guidelines and lists of approved therapies do not correctly represent existing research about “what works” in treatment. Moreover, as I’ve blogged about before, they limit choice and effectiveness without improving outcomes and, in certain cases, lead to poorer results. As official definitions make clear, “evidence-based practice” is NOT about applying particular approaches to specific diagnoses, but rather “integrating the best available research with clinical expertise in the context of patient characteristics, culture, and preferences” (p. 273, APA, 2006).

Read it and speak up!

Until next time,

Scott

Scott D. Miller, Ph.D.

Director, International Center for Clinical Excellence

Hi Scott,

Thanks for introducing me to the ‘non-reproducibility’ of early gold standards in psychology in some of your earlier posts, particularly the dubious claim that babies instinctively know how to imitate.

In my clinical work I have, much to my ego-busting chagrin, discarded some of my favoured therapeutic approaches because of the ORS/SRS scores I have been using these past years.

I resisted the finding that no one approach is better than another.

“I use Gold Standard CBT!” I fumed to myself.

I am effective at CBT and Emotion Driven Behaviour Change … because I am good at CBT and EDBC, that is all. There is no other reason: it works for my patients simply because I can work effectively with them in the mode I work best in. It’s not rocket surgery.

And when CBT-based approaches are not effective, well, I’ll try something else.

Part of my contract with my patients is that they must tell me when I’m talking what I refer to as Hippy Bullshit, i.e., what ain’t working for them.

Process questions, you know?

Of course you do.

My favourite story about the effectiveness of the SRS is of a very forceful man who rated what we were talking about in therapy lower and lower on the SRS each session.

I commented that all we did was listen to him talk, forcefully, of his current problem story each session.

“Yes,” he said, “but that’s not what I want to talk about!”

I asked what he wanted to talk about. He told me, we worked on that, and our work progressed nicely.

Without the SRS scale he may have left therapy and I would not have known my error.

Be humble, Counsellor, be humble.

Well said, Luke.

While I agree with Scott’s comments, it is important that readers also read Scott’s work on outcomes. This is a two-step process.

The first step is accepting that techniques are essentially equivalent.

The second step, which many seem to miss, is using outcome measures to ensure that whatever technique is in use is effective, or else changing focus or technique to something that does work.

Hi Scott, once again a very interesting review. It was so interesting to see Tourette’s mentioned in your study, since it makes up most of my practice. You hit the nail on the head in your description of the greater nuance needed between treating symptoms and addressing the broader factors related to clients’ distress.